Special thanks to Sreeram Kannan for reviewing this post, and to Soubhik Deb for reviewing and editing this post!

Follow them on Twitter: https://twitter.com/soubhik_deb and https://twitter.com/sreeramkannan

Intro

Eigenlayer is the coolest thing I have been learning about lately in the scaling scene. It is pretty new: it is not live yet, has no token, and is only starting to be talked about on Twitter. Let's dive into what it is, how it works, who's behind it, and more.

Eigen is German for “your own.” Your own layer.

What is Eigenlayer?

Simply put, Eigenlayer transfers Ethereum’s economic security and trust to middleware that needs it. Eigenlayer lets you re-stake your already staked ETH to provide security to other applications/middleware deployed on Eigenlayer. This is termed the restaking paradigm. By re-staking on new modules, re-stakers subject themselves to new slashing conditions, and the criteria for slashing will potentially (and probably) differ from one middleware to the next. Restakers then perform the particular tasks specified by each middleware. In return for re-staking their ETH and completing their tasks, re-stakers are rewarded with fees. You can earn more reward for taking on more risk - a classic framework in investing.

It is important to note that restaking in Eigenlayer is entirely opt-in. Each middleware can customize its reward and slashing conditions to reflect its own needs/wants. Slashing is done via smart contracts and happens on-chain. Anything that can be made subject to slashing by on-chain smart contracts is something you can build on Eigenlayer.
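To make the opt-in and slashing flow concrete, here is a minimal Python sketch. It is a toy model and not Eigenlayer's actual contract interface: the `Middleware` and `Restaker` classes and the `fee_per_task` and `slash_fraction` parameters are all hypothetical names I made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Middleware:
    """A hypothetical service with its own reward and slashing terms."""
    name: str
    fee_per_task: float    # reward paid per completed task, in ETH (assumed)
    slash_fraction: float  # share of restaked ETH lost on a violation (assumed)


@dataclass
class Restaker:
    """A staker who may opt in to any number of middlewares."""
    stake: float  # restaked ETH
    rewards: float = 0.0
    opted_in: dict = field(default_factory=dict)

    def opt_in(self, mw: Middleware) -> None:
        # Opting in is voluntary and adds this middleware's slashing condition.
        self.opted_in[mw.name] = mw

    def complete_task(self, mw_name: str) -> None:
        # Only opted-in restakers can earn that middleware's fees.
        self.rewards += self.opted_in[mw_name].fee_per_task

    def slash(self, mw_name: str) -> float:
        # A violation removes a fraction of the stake, as set by the middleware.
        penalty = self.stake * self.opted_in[mw_name].slash_fraction
        self.stake -= penalty
        return penalty


oracle = Middleware("oracle", fee_per_task=0.01, slash_fraction=0.5)
da = Middleware("da-layer", fee_per_task=0.02, slash_fraction=0.25)

alice = Restaker(stake=32.0)
alice.opt_in(oracle)
alice.opt_in(da)
alice.complete_task("oracle")
alice.complete_task("da-layer")
penalty = alice.slash("da-layer")
print(alice.rewards, alice.stake, penalty)
```

The point of the sketch is the shape of the trade: each extra opt-in adds a fee stream and a new way to lose stake, and the terms are set per middleware rather than globally.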

Mental Models

Sreeram: Eigenlayer is an extension protocol that can extend the scope of what ethereum can do. It allows for permissionless innovation all the way down the stack, instead of only at one layer.

David from Bankless: Eigenlayer is commoditizing ETH staking to make it more general purpose.

More from David: If we have trust we can have expressivity.

How to stake on Eigenlayer?

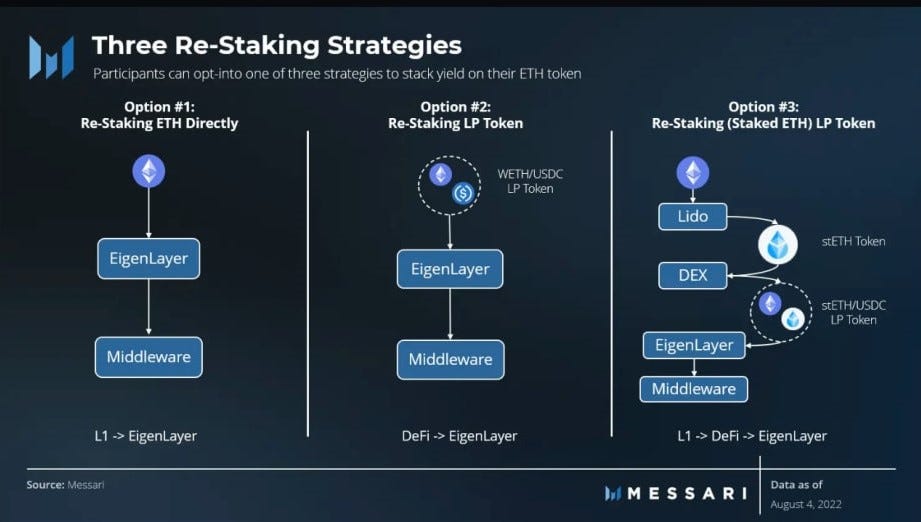

Three ways:

Re-staking ETH directly.

Re-staking LP token.

Re-staking (staked ETH) LP token.

Credit to Messari for the picture.

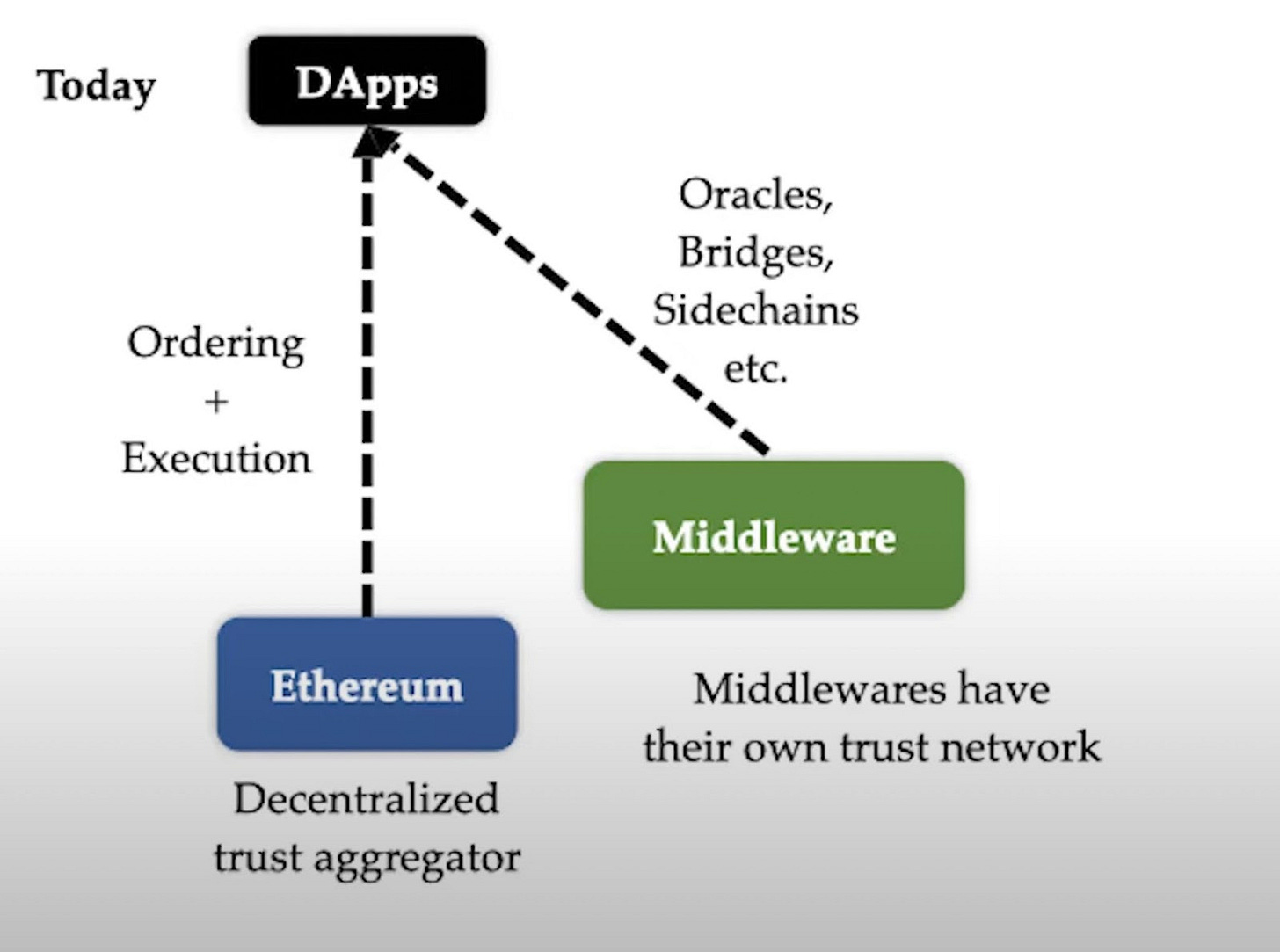

Eigenlayer can take us from this:

To this:

What these pictures show:

Picture 1: Several Trust Networks.

Picture 2: One Trust Network.

Currently, the trust layers of different middlewares (oracles, L1s, etc.) are fragmented, and Eigenlayer can unite them. The goal is that instead of bootstrapping security by inflating away their own token, middlewares can use Eigenlayer to inherit security for their own chain from the most trusted layer in the space, ethereum. In a manner of speaking, Eigenlayer pools security and middlewares can tap into this pool. Middleware developers can then focus on developing their protocol instead of worrying about building their own trust network, which leads to more productivity.

Eigenlayer & Decentralization

One of the more interesting features of Eigenlayer is the incentivization of decentralization. Incentives run the world, so if we can incentivize decentralization, then we will see more decentralized networks spun up, which is very positive.

An example of a cool thing you can do with incentives on Eigenlayer: incentivize home stakers by paying them more than large pools. Having more home stakers improves censorship resistance.

Let's look at an example of incentivizing solo stakers. Today, large staking pools can collude to censor or include whichever transactions they want. According to https://www.mevwatch.info/, 78% of blocks post merge are OFAC compliant (see: https://home.treasury.gov/news/press-releases/jy0916). MEVwatch.info tracks the percentage of blocks built by OFAC compliant MEV-boost relays since the merge.

What's important to note is that non-censoring relays and/or home validators can still serve these “dirty” Tornado Cash transactions. The problem is that they don't get paid any extra fees for the extra value they provide to the network. Eigenlayer lets protocols customize their rules however they want, so in this case a middleware could be customized to pay home stakers more than large pools. Going back to my first point about incentivizing decentralization, this encourages home stakers to spin up validators, which would give the network better censorship resistance.
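As a rough illustration of paying home stakers more than pools, here is a small Python sketch. The `payout` function and its `home_bonus` parameter are hypothetical, and how a middleware would actually tell a home staker from a pool is left out entirely.

```python
def payout(base_fee: float, operator_type: str, home_bonus: float = 0.5) -> float:
    """Return the fee paid for one task.

    home_bonus is an assumed parameter: the extra share paid to home stakers.
    """
    if operator_type not in ("home", "pool"):
        raise ValueError(f"unknown operator type: {operator_type}")
    multiplier = 1.0 + home_bonus if operator_type == "home" else 1.0
    return base_fee * multiplier


print(payout(1.0, "home"))  # 1.5
print(payout(1.0, "pool"))  # 1.0
```

With a 50% bonus, a home staker earns 1.5x what a pool earns for the same task, which is the kind of incentive tilt described above.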

What is EigenDA?

EigenDA is a data availability layer developed by the Eigenlayer team for optimistic and ZK rollups. It will be the first middleware built on top of Eigenlayer. A subset of the ethereum stakers who have joined Eigenlayer can opt in to EigenDA (EigenDA is just a set of smart contracts and off-chain node software). Their job on EigenDA is to guarantee data availability.

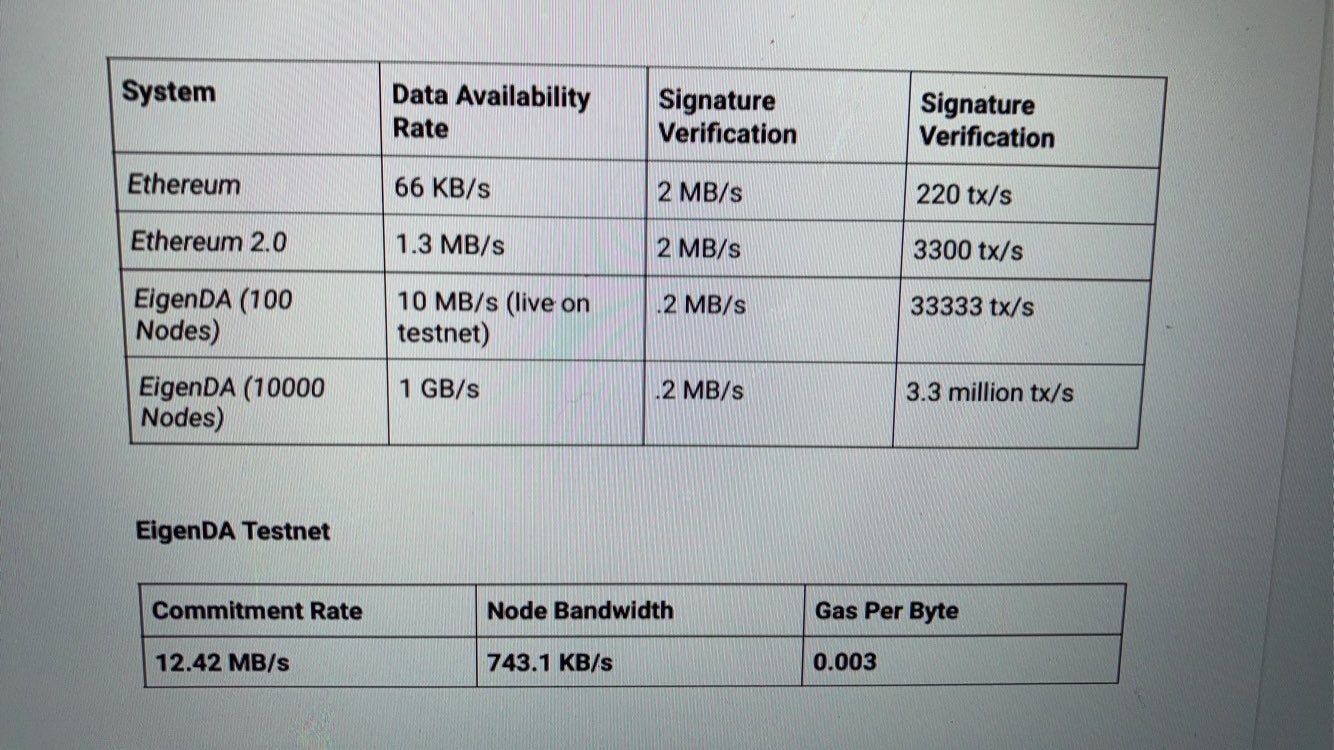

EigenDA is hyperscale.

Let's say there are “N” nodes; each node has a bandwidth of C MB/s.

EigenDA can reach a commitment throughput of NC/2 MB/s with majority security.

This is hyperscale data availability. There is no trade-off between security and scalability. As more and more nodes join you get more and more secure AND more scalable.
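The scaling claim can be checked with quick arithmetic. The Python sketch below just evaluates the NC/2 formula from the text for a few node counts and compares it with a simplified baseline in which every node downloads every blob, so throughput is capped at one node's bandwidth. The function names are mine, and the baseline is my own illustration rather than a model of any specific network.

```python
def eigenda_throughput(n_nodes: int, node_bandwidth: float) -> float:
    """Aggregate throughput N * C / 2, in the same unit as node_bandwidth."""
    if n_nodes <= 0 or node_bandwidth <= 0:
        raise ValueError("n_nodes and node_bandwidth must be positive")
    return n_nodes * node_bandwidth / 2


def full_download_throughput(n_nodes: int, node_bandwidth: float) -> float:
    """Baseline: every node downloads everything, so one node's bandwidth is the cap."""
    if n_nodes <= 0 or node_bandwidth <= 0:
        raise ValueError("n_nodes and node_bandwidth must be positive")
    return node_bandwidth


# Each node is assumed to have C = 1 MB/s of bandwidth.
for n in (10, 100, 1000):
    print(n, eigenda_throughput(n, 1.0), full_download_throughput(n, 1.0))
# 10 5.0 1.0
# 100 50.0 1.0
# 1000 500.0 1.0
```

Under the formula, throughput grows linearly with the number of nodes, while the baseline stays flat no matter how many nodes join.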

The project is similar to Celestia, as both provide a data availability solution. EigenDA appears to have an advantage over Celestia: Celestia has to bootstrap its own new validator set, whereas EigenDA can use the existing validator set from ethereum. Since EigenDA is only a DA solution, while Celestia handles both consensus and DA, EigenDA can achieve higher throughput than its competitor. This leads to important differences between the two architectures: on EigenDA each node has to download only a small encoded part of the data blob, whereas in Celestia each node has to download the whole data blob. Additionally, EigenDA uses unicast channels whereas Celestia uses a P2P network, which incurs network penalties.

Dual Staking Model

The dual staking model is what the name suggests. Let's say you already have your own token securing your network but want to start using Eigenlayer. You can implement a dual staking model and derive security from ETH staked on Eigenlayer along with your own token. A dual staking model lends utility to your own token while using ETH to hedge against the security risk of a potential death spiral in your own token. Of course, middlewares can customize the weight they give to each of the two quorums depending on their own requirements.
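One way to picture the weighting between the two quorums is the Python sketch below. It is only an assumption about how weights could be combined: the `weighted_support` and `is_accepted` functions, the `eth_weight` parameter and the 2/3 threshold are hypothetical and do not come from Eigenlayer.

```python
def weighted_support(eth_signed: float, eth_total: float,
                     token_signed: float, token_total: float,
                     eth_weight: float = 0.5) -> float:
    """Blend the signed fraction of each quorum using a configurable weight."""
    if not 0.0 <= eth_weight <= 1.0:
        raise ValueError("eth_weight must be between 0 and 1")
    if eth_total <= 0 or token_total <= 0:
        raise ValueError("total stake in each quorum must be positive")
    eth_fraction = eth_signed / eth_total
    token_fraction = token_signed / token_total
    return eth_weight * eth_fraction + (1.0 - eth_weight) * token_fraction


def is_accepted(support: float, threshold: float = 2 / 3) -> bool:
    """Accept a result once blended support reaches the (assumed) threshold."""
    return support >= threshold


# 80% of restaked ETH signed, but only 40% of the native token did.
eth_heavy = weighted_support(80, 100, 40, 100, eth_weight=0.75)
token_heavy = weighted_support(80, 100, 40, 100, eth_weight=0.25)
print(is_accepted(eth_heavy), is_accepted(token_heavy))  # True False
```

The same set of signatures passes when the ETH quorum carries most of the weight and fails when the native token does, which is the knob a middleware would tune to its own requirements.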

Customizability

Fields/Signatures/Code:

EigenDA is highly customizable. Let’s say you are using a certain elliptic curve and a proof system and you want to keep using them. Instead of having to use some other arbitrary thing, you can continue using the current setup, as long as you reuse the trust network. You can choose your own field, your own algebraic code, your own commitments, your own signatures, as long as you reuse the trust network.

Fast Proof Certification:

Today, zero knowledge systems suffer from proof latency because of cost. The verification cost is still high, so you can’t run a verification every block on ethereum. However, what you can do is send your proof to the EigenDA nodes. Since you can build an arbitrary distributed system on Eigenlayer, the nodes on there can verify your ZK proof - they immediately certify on a quorum that the proof is correct and then post a commitment on chain (which could be slashed later). This means you have a high speed state bridge that is hyper composable with ethereum. Every block, Eigenlayer will be writing commitments through a single merkle root back into ethereum. That means you can move data back and forth between rollups, compose different rollups, and do all kinds of interesting things on Eigenlayer.
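The certify-then-commit flow can be sketched in a few lines of Python. This is a toy model under my own assumptions: the 2/3 stake threshold, the SHA-256 Merkle tree that duplicates the last node on odd levels, and the commitment strings are all hypothetical and are not taken from EigenDA's design.

```python
import hashlib
from typing import Dict, Sequence


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def quorum_certifies(votes: Dict[str, bool], stakes: Dict[str, float],
                     threshold: float = 2 / 3) -> bool:
    """True if nodes holding at least `threshold` of total stake vouch for the proof."""
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    approving = sum(stakes[node] for node, ok in votes.items() if ok)
    return approving / total >= threshold


def merkle_root(leaves: Sequence[bytes]) -> bytes:
    """Fold many commitments into the single root that would be posted on-chain."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


stakes = {"a": 40.0, "b": 35.0, "c": 25.0}
votes = {"a": True, "b": True, "c": False}

if quorum_certifies(votes, stakes):
    commitments = [b"rollup-1:proof-ok", b"rollup-2:proof-ok", b"rollup-3:proof-ok"]
    root = merkle_root(commitments)
    print(root.hex())
```

Only the 32-byte root needs to go on-chain each block, no matter how many certified commitments sit underneath it.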

Flexible Tokenomics:

EigenDA can allow for more flexible tokenomics. Since Eigenlayer is opt-in, you can pay fees in your own token if the other party is willing to take them. Opt-in changes everything. You can now pay fees via the Starknet or the zkSync token, or whatever token you want.

Sidenote: Data Storage vs Data Availability?

EigenDA is a service that focuses on data availability, whereas blockchains like Filecoin and Arweave are focused on the separate problem of data storage. (p.s - I’m bullish on AR).

Data availability is concerned with whether the data published in the latest block is available or not until settlement. This is distinctly different from data storage, which is concerned with storing data securely and providing guarantees that it can be accessed when needed.

These distinct focuses lead to differences between their target use cases. Data storage blockchains are particularly focused on providing a decentralized way for data to be stored and accessed. Conversely, EigenDA is designed to provide secure and scalable data availability for blockchains and specialized execution environments, like rollups.

I think that these two solutions (restaking and data availability) will complement each other nicely.

Also it is nice that they are building towards the number one problem we need to get right for the next cycle, which is scalability.

What is the Roadmap?

Eigenlayer is currently building on an internal testnet, testing with a few integrations. They plan to have a more public facing testnet in the next 3-4 months, with mainnet planned to be launched 3-4 months after the testnet is released.

Initially, Eigenlayer will launch with only one service, the data availability layer they are building (EigenDA). They want to open it up slowly, from a one-service platform to a few partnered services, and then to a permissionless system.

The first service is aimed to launch around mid-2023 with other services on-boarded in the months following.

Eigenlayer is currently building partnerships with staking providers, staking protocols, and other infrastructure builders in the ethereum ecosystem. It plans to secure collaboration with large staking pools to ensure the protocol launches with a healthy base of validators.

What’s the narrative?

The narrative for Eigenlayer is one centered around trust models and the modular blockchain shift. Building a neutral blockchain (a trust layer) like ethereum has done is really really really hard to do, along with being extremely expensive. Why is it that so many chains try to create their own trust layer when they can utilize the best and most trusted trust layer in the space (ethereum)? Well, they try to create their own trust layer because they need to - and this is where Eigenlayer comes in. Eigenlayer is looking to solve this problem by providing the ability to reuse your staked ethereum and provide security (trust) to another protocol, whether that be new middleware, new ordering protocols, new oracles, etc. This would allow new crypto protocols to reuse trust from ethereum instead of attempting to bootstrap their own, saving so much time and money. This extra time and money gives projects the freedom to innovate and actually build their business instead of wasting time and $ on building their own trust network.

Ethereum pioneered the decoupling of trust and innovation: trust is provided at a common layer while innovation happens at another layer. This is why anons can build massive DeFi protocols, even though a financial product is one of the most trust-dependent businesses you can build. The anon is not required to be trusted, as trust is being borrowed from a common layer (ethereum).

So you might be thinking, why Eigenlayer? As I just explained, ethereum has already been able to decouple trust and innovation, so why do we need Eigenlayer? We need Eigenlayer because ethereum only allows for open innovation of Dapps on the platform. What happens when you want to build a new VM, or a new consensus protocol? You have to build your own blockchain - this is what gave rise to alt-L1s. This is fracturing trust into smaller groups. Eigenlayer can recombine this trust via ethereum and allow projects to build on top of that, eliminating the need to create their own trust network. Instead of trust being scattered across 10 L1s, it could all migrate to ethereum. This would massively increase the cost to attack ethereum, as unifying trust is better than fracturing trust. Eigenlayer is the connection between the trust layer and the innovation layer. That seems like a good business opportunity.

Eigenlayer is onboard with the transition to building blockchains in a modular architecture, as different teams and projects will aim to specialize throughout the different layers of the blockchain stack instead of building it all in house. Eigenlayer is building a data availability solution, called EigenDA. This strikes at the core of the modular narrative.

The modular narrative is reflected in the ongoing colossal shift happening in L1 (layer 1) blockchain architectures. We are moving away from a monolithic design, where consensus, data availability, and execution are tightly coupled (yesterday's ethereum), to a modular future, where execution is separated from data availability and consensus (e.g. today's ethereum, or future Eigenlayer). This separation allows for specialization at the different layers of the stack, which is what Eigenlayer is taking advantage of by building a specialized data availability layer. The shift toward modular designs means that teams will specialize in a task (e.g. data availability) rather than do everything in-house.

Eigenlayer is well positioned for this modular future, and chose a prong (data availability) that should have less competition than other prongs (execution). Less competition means fewer teams fighting over market share, which is good for Eigenlayer. Compare that with Fuel, for example, which is competing at the execution layer, the most competitive prong of the stack (I love Fuel btw, it's one of the most interesting rollup projects in the space and they are building great things). My point is that Fuel is building a layer 2, which is part of the execution layer.

I believe that the innovation/competition will mainly play out between layer 2s (the execution layer) and not between data availability/settlement solutions. Not that there won't be competition at the consensus/data layer, but there will definitely be less. The consensus and data layers need to move slower and not break things, as everything is built on top of them. I can only think of a few protocols competing at the consensus/data layer (Ethereum, Celestia, EigenDA, Polygon Avail), whereas there are already something like 10-20 strong rollup teams.

Sidenote: If you want to read more about modular blockchains, read my previous post:

Use Cases?

Let's talk about different products that can be built on top of Eigenlayer.

MEV Management (MEV Boost++): Block producers on MEV-boost can't make credible commitments outside of the scope of ethereum. On MEV-boost, builders send proposers headers to be signed along with a bid, and a proposer that double signs a header can be penalized. As a proposer you have no agency - however, this can change if you are re-staked via Eigenlayer. Because of EIP-1559 there is always extra space in the block, so through Eigenlayer you can exert proposer agency and include new transactions on top of a builder's block. This process will increase censorship resistance on ethereum.

Sreeram Kannan: Censorship Resistance Via Restaking - SBC 2022
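The proposer-agency idea in the MEV Boost++ item can be sketched as a simple gas-budget exercise in Python. Everything here is an illustrative assumption: the `top_up_block` function, the 30,000,000 gas limit, the greedy selection and the transaction names are hypothetical and do not describe how MEV-boost or Eigenlayer actually handles this.

```python
from typing import List, Tuple

Tx = Tuple[str, int]  # (transaction id, gas used)


def top_up_block(builder_gas_used: int, extra_txs: List[Tx],
                 gas_limit: int = 30_000_000) -> List[Tx]:
    """Greedily append proposer-chosen transactions that fit in the leftover gas."""
    if builder_gas_used > gas_limit:
        raise ValueError("builder block already exceeds the gas limit")
    remaining = gas_limit - builder_gas_used
    included: List[Tx] = []
    for tx_id, gas in extra_txs:
        if gas <= remaining:
            included.append((tx_id, gas))
            remaining -= gas
    return included


# The builder's block uses half of the assumed limit; the proposer adds what fits.
extras = [("censored-1", 10_000_000), ("too-big", 8_000_000), ("censored-2", 5_000_000)]
print(top_up_block(15_000_000, extras))
# [('censored-1', 10000000), ('censored-2', 5000000)]
```

The builder's block is left untouched; the proposer only fills space the builder did not use, which is why this can add censorship resistance without replacing the builder market.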

MEV Management (DeFi):

Block proposers could commit to do collateral liquidations.

Block proposers could take atomic arbitrage and funnel it back to Uni/Sushi pools.

Bridges: You can run light clients to other chains off-chain and make state claims on ethereum. The input is acted upon immediately without latency, and the claimant can later be slashed if it is wrong. This requires an EVM implementation of the light client so that claims can be challenged with fraud proofs.

Oracles: Instead of being secured solely by their own token, oracles can become secured with a larger amount of collateral and therefore have much better security. More security reduces the likelihood of an attack.

DA services (e.g. EigenDA): DA layers are crucial when it comes to scaling rollups, as data is the most expensive component of a rollup today. Rollups and DA layers are the future of scaling in this industry. Building a DA service on Eigenlayer makes a ton of sense for these reasons, and is probably why EigenDA will be the first protocol to launch on Eigenlayer. Unlike other DA layers currently being built, EigenDA can tap into existing ETH validators via Eigenlayer instead of bootstrapping their own set, which is a huge advantage.

Rollups (on EigenDA): Rollups are the future of scaling on ethereum, along with scalable DA layers. These rollups write commitments and settle on ethereum and by doing that they inherit the security of ethereum. EigenDA, via Eigenlayer, can inherit this trust as well from ethereum and provide massive DA services to rollups built on top. I predict that Eigenlayer will be a great place to build rollups for a few reasons:

Most of DeFi activity and liquidity is already on ethereum.

Eigenlayer is building its own data availability layer (EigenDA), which is great for data-hungry rollups. Data is the most expensive part of a rollup, and solutions like EigenDA can drastically lower costs for rollups. The value of having a highly scalable DA layer available to you as a rollup deploying on Eigenlayer can't be overstated.

New VMs/Consensus Protocols: Eigenlayer is able to offer permissionless innovation down the stack, instead of only at one layer. This allows for innovation at layers other than the application layer, such as the consensus layer.

Single Slot Finality: Something we don't have yet in ethereum is fast finality. If enough validators opt in to Eigenlayer and agree not to fork a block, we may be able to get single slot finality in a purely opt-in fashion. This is possible because the economic security provided by Eigenlayer underwrites the finality of the chain. It is important to note that these interactions with Gasper need careful consideration.

Funding

On August 30th, 2022, Eigenlayer raised an early stage VC round of $14.4 million.

Who is behind it?

Sreeram Kannan is the founder of Layr Labs, which is building Eigenlayer. He is an associate professor at the University of Washington in Seattle where he leads the UW blockchain club. There, he has worked on consensus protocols, new distributed systems and scalability. One of his key takeaways from the ethereum ecosystem was the amount of permissionless innovation available at the smart contract layer. “Anybody can come and deploy a new contract, and they don't need to be trusted as they are borrowing decentralized trust from ethereum. People talk about things like modular blockchain, and I think of ethereum as the first modular blockchain. It modularized trust so Dapps could consume it, which led to the pseudonymous economy. You don't need to be trusted as a Dapp creator, as the blockchain underneath is trusted.”

Sreeram seems to be heavily driven by first principles; he talks a lot about how the core value proposition of blockchains is decentralized trust. He shares many beliefs with crypto OGs, although he entered the space much later, in 2018. In my opinion, a founder that embraces these old school crypto beliefs is one that you should pay attention to. They are ever more necessary as the space grows bigger and bigger and these beliefs are challenged, or worse, attacked. I, along with many others, value these OG crypto principles and I think Sreeram is a great embodiment of them. I would follow where he goes in the space.

Thanks For Reading!

You can follow me on twitter: https://twitter.com/FinanceKidd